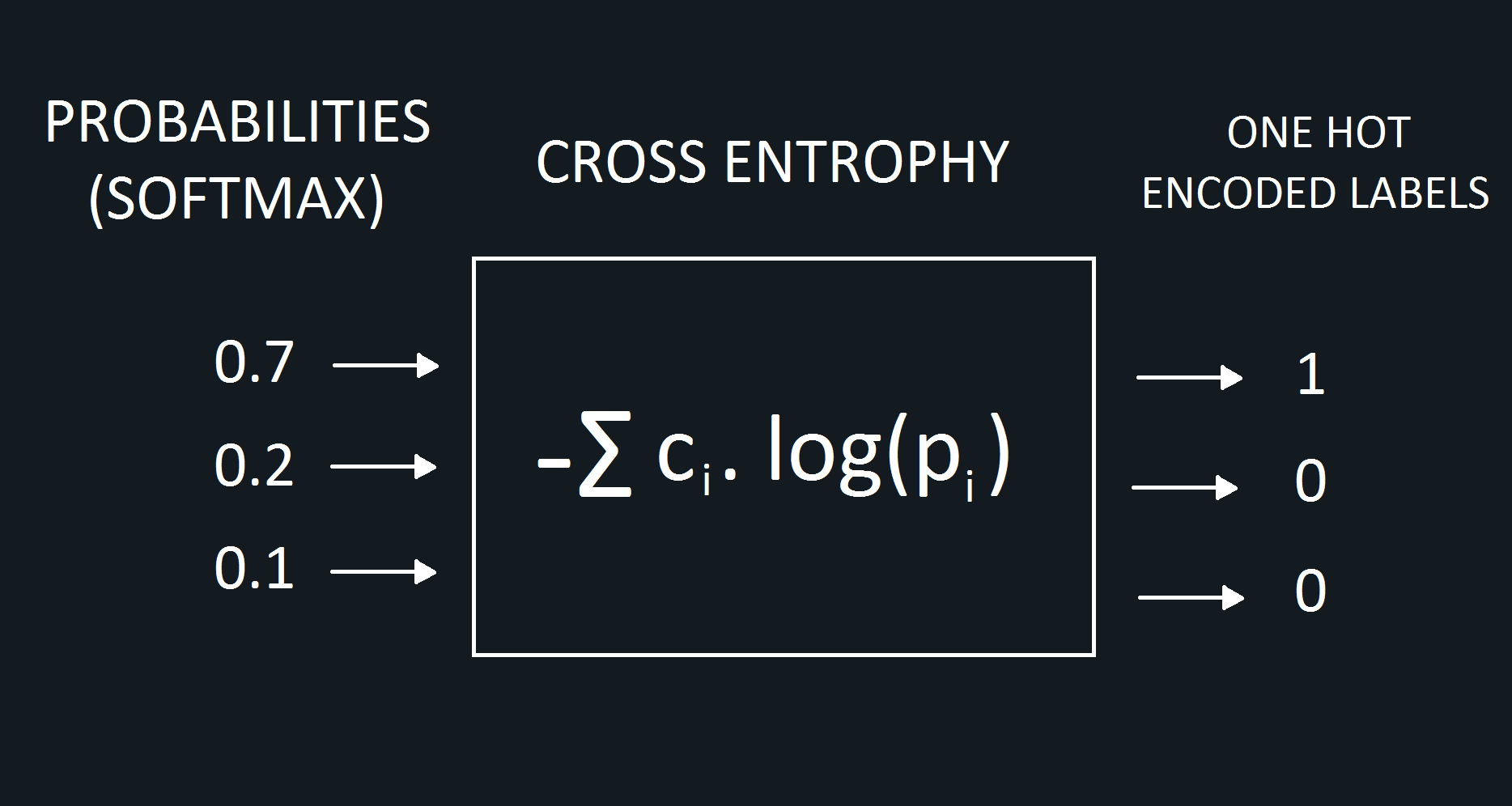

Log Loss and Cross Entropy Calculate the Same Thing

Cross-entropy is a measure of the difference between two probability distributions for a given random variable or set of events.

- Log Loss is the Negative Log Likelihood
- Intuition for Cross-Entropy on Predicted Probabilities
- Calculate Cross-Entropy Between Class Labels and Probabilities
- Calculate Cross-Entropy Using KL Divergence
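
The relationships named above can be checked numerically. The following is a minimal sketch in plain Python, not a reference implementation: the function names and the example probabilities are illustrative assumptions, and natural logarithms are used, so results are in nats. It computes cross-entropy directly, recovers it again as entropy plus KL divergence, and compares the mean cross-entropy over binary class labels with log loss computed as the mean negative log-likelihood.

```python
from math import log


def cross_entropy(p, q):
    """H(P, Q) = -sum over x of P(x) * log(Q(x)), in nats."""
    return -sum(pi * log(qi) for pi, qi in zip(p, q) if pi > 0)


def entropy(p):
    """H(P) = -sum over x of P(x) * log(P(x)), in nats."""
    return -sum(pi * log(pi) for pi in p if pi > 0)


def kl_divergence(p, q):
    """KL(P || Q) = sum over x of P(x) * log(P(x) / Q(x)), in nats."""
    return sum(pi * log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def log_loss(y_true, y_pred):
    """Mean negative log-likelihood of binary labels under a Bernoulli model."""
    total = 0.0
    for y, y_hat in zip(y_true, y_pred):
        total += -(y * log(y_hat) + (1 - y) * log(1 - y_hat))
    return total / len(y_true)


# Two illustrative distributions over three events (assumed values).
p = [0.10, 0.40, 0.50]
q = [0.80, 0.15, 0.05]

h_pq = cross_entropy(p, q)
h_p_plus_kl = entropy(p) + kl_divergence(p, q)

# Illustrative binary labels and predicted probabilities of class 1.
y_true = [1, 1, 0, 0]
y_pred = [0.9, 0.6, 0.2, 0.3]

# Treat each label as a one-hot distribution over the classes [0, 1].
mean_ce = sum(
    cross_entropy([1 - y, y], [1 - y_hat, y_hat])
    for y, y_hat in zip(y_true, y_pred)
) / len(y_true)
ll = log_loss(y_true, y_pred)

print(f"H(P, Q)          = {h_pq:.6f} nats")
print(f"H(P) + KL(P||Q)  = {h_p_plus_kl:.6f} nats")
print(f"mean cross-entropy over labels = {mean_ce:.6f}")
print(f"log loss                       = {ll:.6f}")
```

Each pair of printed values should agree up to floating-point error. Using base-2 logarithms instead would report the same quantities in bits.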